Textual Analysis & Technology: Information Overload, Part II

Post by Kyra Hunting, University of Kentucky

This post is part of Antenna’s Digital Tools series, about the technologies and methods that media scholars use to make their research, writing, and teaching more powerful.

In my last post, I discussed how I stitched together a system built primarily from simple office spreadsheet software to help me with the coding process used in my dissertation. As I moved into my first year post-diss with new projects involving multiple researchers and multiple kinds of research (examining not only television texts but also social media posts, survey responses, and trade press documents), I realized that my old methods weren’t as efficient or as accessible to potential collaborators as I needed them to be. This realization started a year’s worth of searching for a great software solution that would help me with the different kinds of coding that I found myself doing as I embarked on new projects. While I discovered a number of great qualitative research software packages, ultimately nothing was “just right.”

The problem with most of the research-oriented software I found was that it is based on at least one of two assumptions about qualitative research: 1) that researchers have importable (often linguistic, text-based) materials to analyze and/or 2) that we know what we are looking for/hoping to find. Both of these assumptions presented limitations when trying to find the perfect software mate for my research.

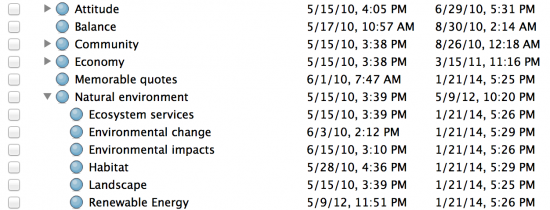

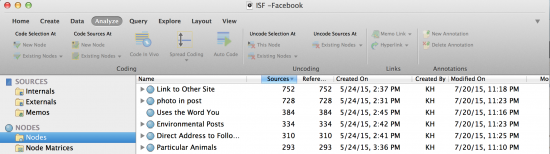

The first software I tried was NVivo, a qualitative research software platform that emphasizes mixed media types and mixed methods. This powerful software was great in many ways, not the least of which was that it counted for me. I first experimented with NVivo for a project I am doing (with Ashley Hinck) looking at Facebook posts and tweets as a site of celebrity activism, and in this context the software has acquitted itself admirably. It allowed me to import the PDFs into the system and then code them one by one. I found the ability to essentially have a pull-down list of items to consider very convenient, and I appreciated that I could add “nodes” (tags for coding) as I discovered them and could connect them to other broader node categories.

The premise behind my dissertation had been to set up a system to allow unexpected patterns to emerge through data coding, and I wanted to carry this approach into my new work. NVivo supports that goal well, counting how many of the 1,600+ tweets being coded were associated with each node and allowing me to easily see patterns emerge in terms of which codes were most common and which were rare. An effective query system allows researchers to quickly find all the instances of any given node (e.g., all tweets mentioning a holiday) or group of nodes (e.g., all tweets mentioning a holiday and including a photo). While the format of my data meant I wasn’t able to use NVivo’s very strong text-search query, its capability to search for text within large amounts of data, including transcripts, showed great potential. NVivo seemed to be the answer I was looking for, until I tried to code a television series.
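To make the counting and querying described above concrete, here is a minimal Python sketch. It is not NVivo's API or file format; the tweet IDs, the node names, and the helper functions `count_nodes` and `find_with_all` are all hypothetical, invented only to illustrate the idea of tallying nodes and retrieving items that carry a given combination of them.

```python
from collections import Counter

# Hypothetical coded data: each tweet ID maps to the set of nodes (tags) applied to it.
coded_tweets = {
    "tweet_001": {"holiday", "photo"},
    "tweet_002": {"holiday"},
    "tweet_003": {"photo", "charity"},
    "tweet_004": {"charity"},
}


def count_nodes(coded):
    """Count how many items each node was applied to."""
    counts = Counter()
    for nodes in coded.values():
        counts.update(nodes)
    return counts


def find_with_all(coded, *wanted):
    """Return the sorted IDs of items coded with every node in `wanted`."""
    wanted_set = set(wanted)
    return sorted(item for item, nodes in coded.items() if wanted_set <= nodes)


counts = count_nodes(coded_tweets)
holiday_photos = find_with_all(coded_tweets, "holiday", "photo")

# Show the most common nodes first, then the combined query result.
for node, n in counts.most_common():
    print(node, n)
print(holiday_photos)
```

Storing each item's nodes as a set keeps the "group of nodes" query to a single subset check, and new nodes can be added at any point without changing the structure, which matches the discover-as-you-go style of coding described here.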

For social media, my needs had actually been relatively simple: I was marking whether any of a few dozen attributes was present in relatively short posts. But with film and television my needs grew. It wasn’t as simple as x, y, or z being present; rather, if x (say, physical affection) was present, I also needed to note how many times it occurred and between which people, and to add descriptive notes. This is not what NVivo is built to do. NVivo imagines that researchers are doing three different things as distinct and separate steps (coding nodes, searching text, and linking a single memo to a single source). NVivo is great at doing these things and I expect it will continue to serve me well when working with survey and text-based data. But for the study of film and television shows I found NVivo demanded that I simplify the questions I asked in ways that were inappropriate. After all, in the complex audio-visual-textual space of film and television, what matters isn’t just that a zebra is present but whether it is live-action or animated, whether it talks or dances, how many zebras are around it, what sound goes with it, etc. Memos allowed me to add notes, but NVivo only allowed one memo per source, and the memos were awkwardly designed and hard to retrieve alongside the nodes.

I found that NVivo competitor Dedoose gave me a bit more flexibility in terms of the ways I could code, but it did not do well with my need to simply add episodes as codable items. I was unable to import the episode. Also, simply typing in an episode’s title and coding as I watched was much harder than I expected. Like NVivo, Dedoose seems to imagine social scientists who work with focus groups, surveys, oral histories, etc. as its primary market. Trying to use Dedoose without having an existing spreadsheet or set of transcripts to upload proved unwieldy. In the analysis of film and television, coding while you are collecting data is possible, even desirable, yet the notion that data collection and the coding of data would be two separate acts was built into this system.

If Dedoose’s limitation was the notion of importable data, Qualtrics’ was the notion that I would have already decided what I would find. I quickly discovered that while Qualtrics was wonderful at setting up surveys about each episode and effectively calculated the results, it did not facilitate discovery. If, for example, I wanted to code for physical affection and sub-code for gentle, rough, familial, sexual, it could manage that well. But if I wanted to add which characters were involved, this too needed to be a list to select from. I couldn’t simply type in the characters’ names and retrieve them later. Imagine the number of characters involved in physical affection over six years of a prime-time drama and you can see why a survey list (instead of simply typing in the names) would quickly become unwieldy.

That is how I found myself falling back on enterprise software, this time the database software FileMaker Pro. FileMaker Pro doesn’t do a lot of things. It doesn’t allow you to search the text of hundreds of Word documents, it doesn’t visualize or calculate data for you, and it doesn’t automatically generate charts. But what it does do is give you a blank slate for putting the variety of types of information you need into each database, and it helps you create a clear interface for inputting this information. Would I like to code using a set of check boxes indicating all the themes that I have chosen to trace in a given episode? No problem! Need a counter to input the number of scenes in a hospital or police station? Why not?! Need to combine a checkbox with a textbox so I can both note what happened and who it happened to? Sure! And since it is a database system, finding all of the episodes (entries) with the items that were coded for is simple and straightforward. This ability to not only code external items but to code them in multiple ways for multiple types of information using multiple input interfaces proved invaluable, as did its ability to let me continue coding on an iPad as well as a laptop, which allowed me to stream video on my computer, at work or while traveling, and code simultaneously.
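For readers who think in code, the kind of record this paragraph describes (check boxes, counters, and a checkbox paired with free text) could be sketched roughly as follows. This is plain Python, not FileMaker Pro's schema or scripting language; the class names `EpisodeRecord` and `CodedEvent`, the field names, and the sample episodes and characters are all hypothetical placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class CodedEvent:
    """A checkbox-plus-textbox entry: what happened and who it happened to."""
    code: str
    characters: list[str]
    note: str = ""


@dataclass
class EpisodeRecord:
    """One database entry per episode, mixing several input types."""
    title: str
    themes: set[str] = field(default_factory=set)                  # check boxes
    location_counts: dict[str, int] = field(default_factory=dict)  # counters
    events: list[CodedEvent] = field(default_factory=list)         # checkbox + free text


def episodes_with_theme(records, theme):
    """Find every episode in which a given theme was ticked."""
    return [r.title for r in records if theme in r.themes]


def events_by_character(records, code):
    """Tally how often each character appears in events with the given code."""
    tally = {}
    for record in records:
        for event in record.events:
            if event.code == code:
                for name in event.characters:
                    tally[name] = tally.get(name, 0) + 1
    return tally


# Hypothetical sample data for illustration only.
records = [
    EpisodeRecord(
        title="Episode 1",
        themes={"family", "illness"},
        location_counts={"hospital": 3},
        events=[CodedEvent("physical affection", ["Character A", "Character B"], "hug after surgery")],
    ),
    EpisodeRecord(
        title="Episode 2",
        themes={"family"},
        location_counts={"hospital": 1, "police station": 2},
        events=[
            CodedEvent("physical affection", ["Character A", "Character C"]),
            CodedEvent("argument", ["Character B", "Character C"]),
        ],
    ),
]

illness_episodes = episodes_with_theme(records, "illness")
affection_tally = events_by_character(records, "physical affection")
hospital_scenes = sum(r.location_counts.get("hospital", 0) for r in records)
```

Because character names are free text rather than a fixed list, new names can be typed in as they appear and still be retrieved later, which is the flexibility that a predefined survey list lacks.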

FileMaker Pro has its limitations, too. It does not connect easily with other coders unless everyone has access to the expensive FileMaker Server, and since I have just begun using FileMaker I may find myself still paying for a month of Dedoose here and there to visualize data I collected in FileMaker or importing the notes from my database into NVivo to make a word tree. But at the end of the day what characterizes textual analysis is its interpretive qualities. The ability to add new options as you proceed, to combine empirical, descriptive, numerical, linguistic and visual information, and to have a platform that evolves with you is invaluable.

While I didn’t find the perfect software solution, I found a lot of useful tools and I discovered something important: as powerful as current qualitative research software is, none of it is well suited to textual analysis. The textual analysis that media studies researchers do creates unique challenges. While transcripts of films and television shows can be easily imported (if they can be obtained), the visual and aural elements of these texts are essential, and so many researchers in this area will want to code items without importing them as transcripts into the software. Furthermore, the different ways to approach media – counting things, looking for themes, describing aesthetic elements – necessitate the ability to have multiple ways to input and retrieve information (similar to Elana Levine’s discussion about incorporating thousands of sources in multiple formats for historiographical purposes). The potential need to have multiple people coding television episodes or films requires a level of collaboration that is not always easily obtained outside of social-science-oriented software like Qualtrics. Early film studies approaches often combined reception with description, and these two actions remain important in contemporary textual analysis. Textual analysis requires collecting, coding, analyzing, and experiencing simultaneously (particularly given the difficulties in going back to retrieve a moment from hours and hours of film or television). It is an act of multiplicity, experiencing what you watch in multiple ways and recording the information in multiple ways, that current software does not yet facilitate. The audio-visual text requires a different kind of software, one that does not yet exist, one that would not only allow for all these different kinds of input and analysis but also allow you to easily associate codes with timestamps, count shots or scene lengths, and link them with themes.
While the perfect software is not out there, I found that combining software like FileMaker Pro, NVivo, and Dedoose with simple tools like Cinemetrics could still help me dig more deeply into media texts.

Hi Kyra, Jason from Dedoose here. Thank you for writing this article; we are always trying to examine and experience other workflows to help enable researchers. In that regard, we would love to have a chat with you to better understand exactly how Dedoose did not fit this need, and to formulate a plan for improving Dedoose in the future to address this. Right off the bat, it seems you could have made the video a single excerpt to apply codes to the video, as well as excerpts for portions of video for specific actions. For counting things you can use weighted codes or descriptors. Additionally, memos can be linked to media and all other items in Dedoose, and can be linked to a specific moment in the video. Either way, we would love to work with you to make sure Dedoose is a good fit for collaborative qualitative or mixed-methods research. Thank you again.

~ JT